Introducing n1: Yutori's Browser-Use Model

By the Yutori team on January 29, 2026

In November, we introduced Navigator, a state-of-the-art web agent that autonomously navigates websites to complete everyday tasks. At the heart of Navigator is n1, our browser-use model.

Today, we're making n1 available in our API — giving developers access to the best accuracy, pricing, and latency for browser automation among computer-use models.

n1 is optimized for web tasks through extensive mid-training and supervised fine-tuning—and, uniquely, reinforcement learning on real websites rather than just simulated environments—resulting in strong performance across a wide range of real-world web automation tasks.

State-of-the-Art in Browser-Use

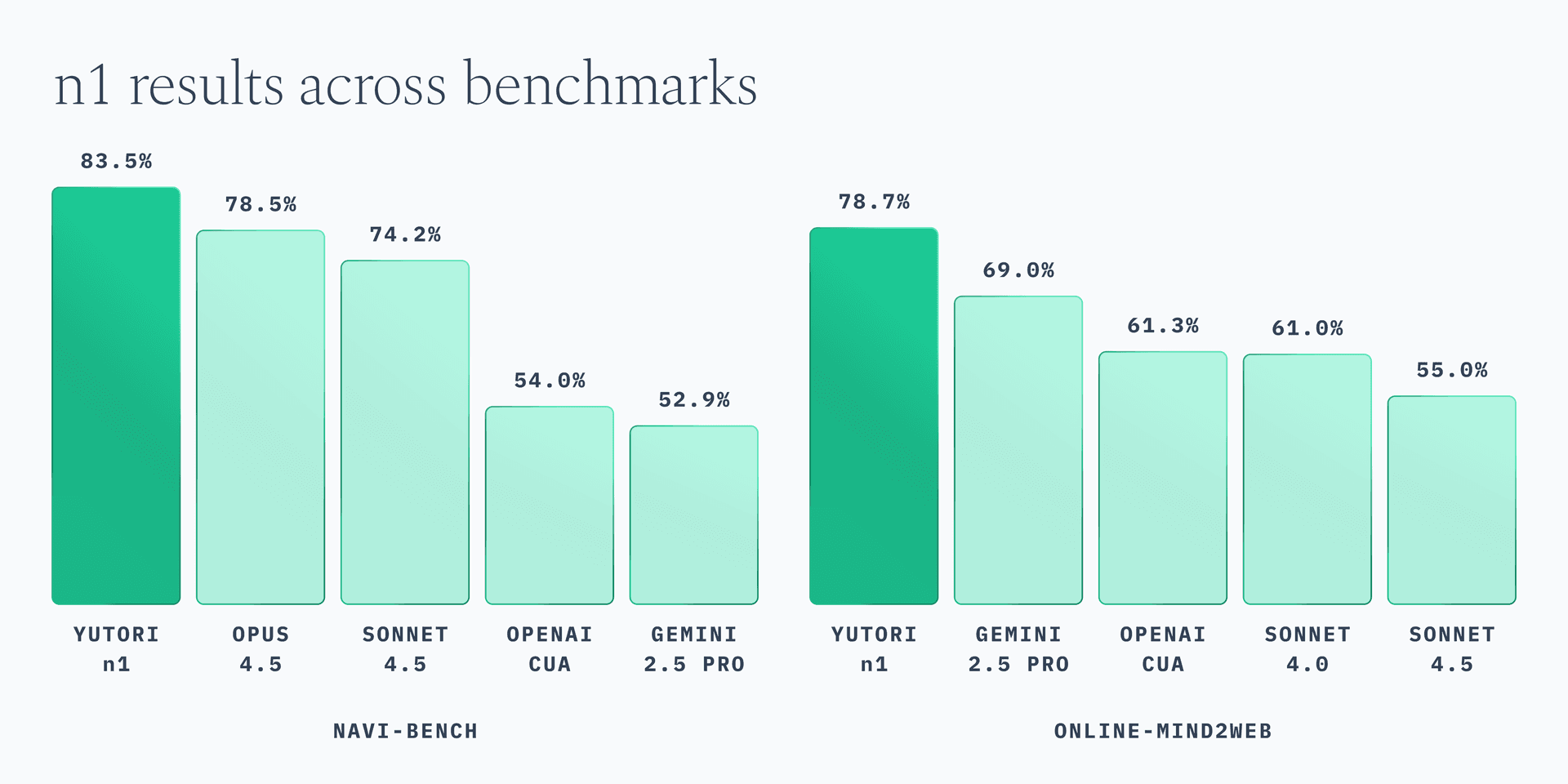

n1 achieves state-of-the-art performance on browser-use benchmarks, outperforming all other computer-use models on both Navi-Bench¹ and Online-Mind2Web². It reaches 83.5% accuracy on Navi-Bench, which comprises 100 tasks across five real-world websites (Apartments, Craigslist, OpenTable, Resy, and Google Flights), and 78.7% on Online-Mind2Web, a benchmark of 300 tasks spanning 136 popular sites that evaluates real-world web agent performance.

Figure 1. n1 achieves state-of-the-art accuracy on browser-use benchmarks.

Browser Automation at Scale

n1 offers the most cost-effective pricing among computer-use models: up to 8x cheaper than the alternatives in Table 1 (the largest gap is in output-token price against Claude 4.5 Opus) while delivering better accuracy.

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| Claude 4.5 Opus | $5.00 | $25.00 |
| Claude 4.5 Sonnet | $3.00 | $15.00 |
| OpenAI Computer-Use | $3.00 | $12.00 |
| Gemini 2.5 Computer-Use | $1.25 | $10.00 |
| Yutori n1 | $0.75 | $3.00 |

Table 1. n1 is the most cost-effective computer-use model.

At $0.75 per million input tokens and $3.00 per million output tokens, n1 makes browser automation accessible at scale.
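To make the pricing comparison concrete, here is a minimal sketch that recomputes the price ratios from Table 1 and estimates the token cost of a task. The prices are copied from the table; the function names and the token counts in the usage example are illustrative assumptions, not part of the n1 API or measured figures.

```python
# Prices in USD per 1M tokens (input, output), copied from Table 1.
PRICES = {
    "Claude 4.5 Opus": (5.00, 25.00),
    "Claude 4.5 Sonnet": (3.00, 15.00),
    "OpenAI Computer-Use": (3.00, 12.00),
    "Gemini 2.5 Computer-Use": (1.25, 10.00),
    "Yutori n1": (0.75, 3.00),
}


def price_ratios(baseline="Yutori n1"):
    """Return (input_ratio, output_ratio) of each model's price to the baseline's."""
    base_in, base_out = PRICES[baseline]
    return {
        name: (inp / base_in, out / base_out)
        for name, (inp, out) in PRICES.items()
        if name != baseline
    }


def task_cost(model, input_tokens, output_tokens):
    """Token cost in USD for one task, given hypothetical token counts."""
    inp, out = PRICES[model]
    return (input_tokens * inp + output_tokens * out) / 1_000_000


if __name__ == "__main__":
    for name, (r_in, r_out) in price_ratios().items():
        print(f"{name}: {r_in:.1f}x input, {r_out:.1f}x output")
    # Hypothetical workload: 1M input tokens and 100k output tokens.
    print(f"n1 cost: ${task_cost('Yutori n1', 1_000_000, 100_000):.2f}")
```

The largest ratio is the output price of Claude 4.5 Opus ($25.00 / $3.00, about 8.3x), which is consistent with the "up to 8x" figure; input-price ratios are smaller (at most about 6.7x).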

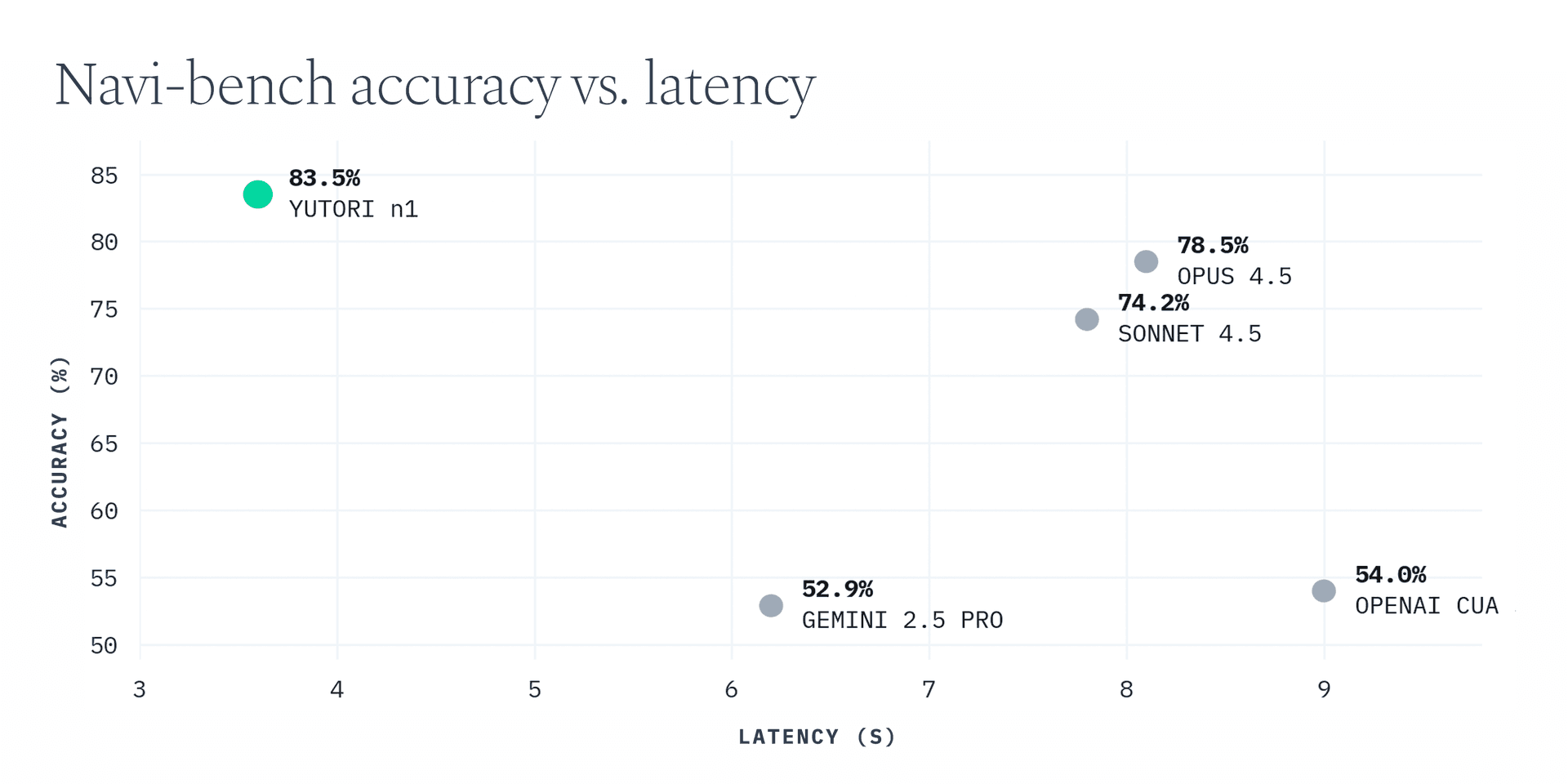

Speed matters too — every second of model latency adds up across the dozens of steps required to complete a web task. n1 delivers the fastest per-step latency among computer-use models.

Figure 2. n1 achieves the lowest per-step latency among computer-use models.

Measured on Navi-Bench under identical conditions with a maximum of 75 steps per task, n1 achieves an average per-step latency of 3.6 seconds — 2.2x faster than Claude 4.5 Opus and 1.7x faster than Gemini 2.5 Computer-Use. Combined with its higher accuracy, n1 completes tasks both faster and more reliably.
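As a rough illustration of how per-step latency compounds, the sketch below derives the per-step latencies implied by the stated speedups and the worst-case model time for a task that uses all 75 steps. It assumes "Nx faster" means the other model's per-step latency is N times n1's; the derived numbers are back-of-the-envelope estimates, not reported measurements, and they cover model latency only (not page loads or browser actions).

```python
# Figures stated in the text above.
N1_STEP_SECONDS = 3.6   # n1 average per-step latency
MAX_STEPS = 75          # step cap per task in the measurement
SPEEDUP_VS_N1 = {
    "Claude 4.5 Opus": 2.2,
    "Gemini 2.5 Computer-Use": 1.7,
}


def implied_step_latency(speedup, n1_seconds=N1_STEP_SECONDS):
    """Per-step latency implied for a model that n1 is `speedup` times faster than.

    Assumption: "Nx faster" is a ratio of per-step latencies.
    """
    return n1_seconds * speedup


def model_time_for_task(step_seconds, steps=MAX_STEPS):
    """Total model latency for a task taking `steps` steps (model time only)."""
    return step_seconds * steps


if __name__ == "__main__":
    print(f"n1: {model_time_for_task(N1_STEP_SECONDS):.0f} s at the step cap")
    for name, speedup in SPEEDUP_VS_N1.items():
        per_step = implied_step_latency(speedup)
        total = model_time_for_task(per_step)
        print(f"{name}: ~{per_step:.1f} s/step, ~{total:.0f} s at the step cap")
```

Under these assumptions, a task that runs to the 75-step cap spends about 270 seconds in model latency with n1, so even a few seconds saved per step translates into minutes per task.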

Get Started

The n1 API provides direct access to Yutori's browser-use model so you can build your own browser automation pipelines.

We're excited to see what you build with it.

Addendum — March 9, 2026

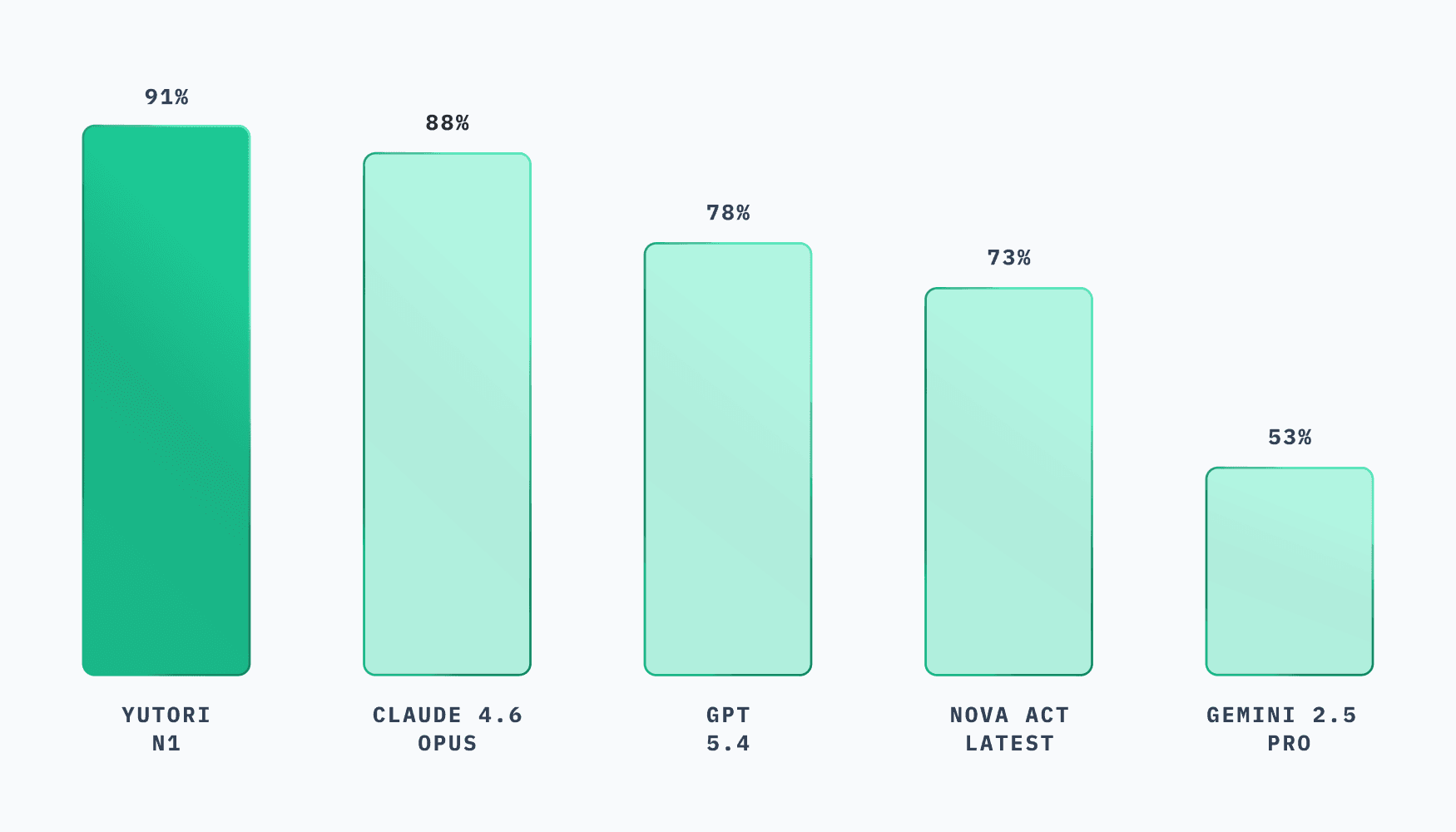

Since this post was published in January 2026, several new frontier models with computer-use capabilities have been released. Below is an updated comparison of n1 with other frontier models on Navi-Bench.

n1 achieves 91% on Navi-Bench v1, outperforming Claude 4.6 Opus (88%), GPT-5.4 (78%), Nova Act Latest (73%), and Gemini 2.5 Pro (53%).

¹ Navi-Bench: a benchmark for evaluating web agents on real websites with verifiable rewards.